Downsizing

It has been a long time since I’ve written a blog post, and while I have a long list of things to write about, my time is unfortunately finite.

Over the past couple of years I have been making an effort to reduce the heat, power usage and noise of my lab.

A number of changes have happened and I intend to write separate posts about some of them with more detail.

Hypervisors

While the Dell R210ii servers worked very well for my needs and are certainly quiet for 1U rackmount servers, they were still rather noisy.

I also started to have issues with the age of the hardware in the form of multiple fan failures. While replacements are available they are rather expensive when considering what I paid for the servers themselves, so in the short term I used Noctua fans.

After several replacements I ultimately decided that newer hardware was in order. Rather than go for rackmount or server grade hardware again, this time around I decided to go for something smaller and more energy efficient.

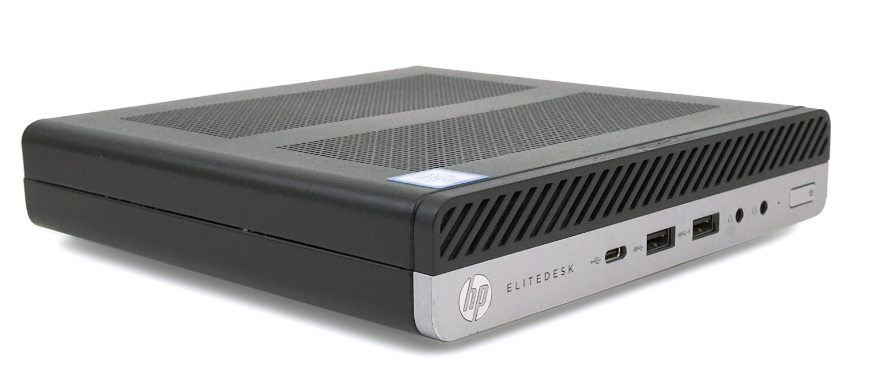

Enter the HP EliteDesk 800 G3.

Each 800 G3 was kitted out with:

- CPU: i5 6500

- RAM: 32GB

- Storage: 1 x 256GB NVMe SSD + 1 x 256GB 2.5” SSD

These have proved to be good replacements for the R210ii’s and while the i5s do not have Hyper-Threading, this has not really caused any issues for my workloads.

I am not running RAID any more so some redundancy is lost. However, the single-instance applications/containers can very easily be set up with replication and HA in Proxmox. Other applications can be highly available at the application level where necessary.

As these are consumer devices, I accepted that I would not have iLO/iDRAC. However, the HP 800 G3s do have Intel AMT which, when combined with MeshCommander, offers almost all of the same functionality.

NAS

The FreeNAS (now TrueNAS) server is still running well with little to no change. In the longer term, I do intend to migrate the applications running in TrueNAS jails away from the NAS and into containers on Proxmox.

The hardware is aging so at some point I will need to rebuild with something more modern. When this happens I may look to migrate to TrueNAS Scale, or potentially away from TrueNAS altogether to ZFS on Linux, whether that be under Proxmox or bare metal Ubuntu.

Network and Firewall

Both the HP V1910-48G switch and SuperMicro pfSense firewall were rather old and while neither was really causing any issues, I ultimately decided that upgrades/replacements were in order (especially the firewall, which did not have AES-NI support).

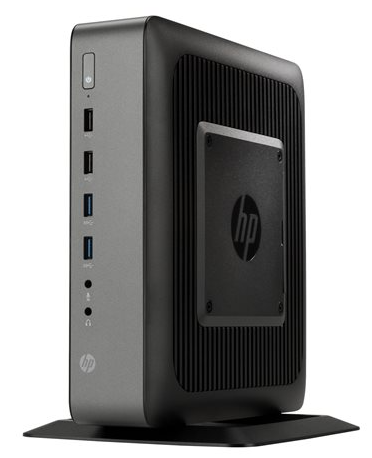

The SuperMicro 1U rackmount pfSense firewall was replaced with an HP T620 Plus with a 4-port Intel Gigabit NIC PCIe card.

Featuring an AMD GX-420CA SoC, 4GB of RAM and a 16GB M.2 SSD, this was more than enough for my needs. I also decided to migrate from pfSense to OPNsense, which has served me very well so far.

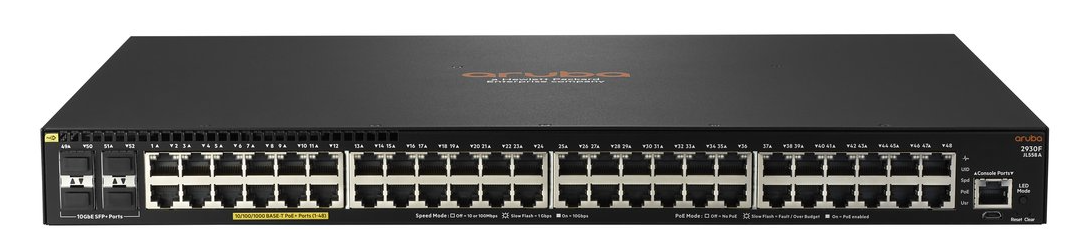

The HP V1910-48G was replaced with an Aruba 2930F-48G.

The Aruba is a significant upgrade and offers a good CLI, configuration and management via an API (with an Ansible module) and, if desired, cloud management.

Admittedly the switch was a little noisy out of the box for my liking. However, the fans were easily replaced with Noctua 40mm units and, after working out the fan headers, they report correct fan speed information back to the switch.

Plans

As already stated, I will likely need to look into upgrading or replacing the primary NAS at some point. I intend to purchase an additional HP 800 G3 as a third node for the cluster and there may also be a need for a Proxmox server with additional storage. (A rebuilt HP Microserver may do the trick here.)
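As a rough illustration of why a third node helps, here is a minimal sketch of the quorum arithmetic. It assumes the cluster uses a simple majority quorum with one vote per node and no external tie-breaker device; the function names are made up for this example and are not part of any Proxmox tooling.

```python
def quorum_votes(nodes: int) -> int:
    """Votes needed for a strict majority, assuming one vote per node."""
    return nodes // 2 + 1


def tolerated_failures(nodes: int) -> int:
    """How many nodes can be offline while the rest still hold a majority."""
    return nodes - quorum_votes(nodes)


if __name__ == "__main__":
    for n in (2, 3):
        print(f"{n} nodes: quorum={quorum_votes(n)}, "
              f"can lose {tolerated_failures(n)}")
```

Under those assumptions a two-node cluster cannot lose either node without losing its majority, while a three-node cluster can lose one.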

The general goal is to move towards more energy efficient hardware, reduce heat and noise and implement high availability and redundancy at the application level (or with Proxmox HA where necessary) to reduce the reliance on individual cluster node reliability.

As I have been having good success with KVM and LXC, I have mostly avoided Docker/k8s in my lab. This may change going forwards and although I don’t necessarily intend to switch over, I do feel that this is an area I need to spend some time learning in more depth.